For more than three years, Artificial Intelligence (AI) has been at the forefront of the news cycle. That started when OpenAI released ChatGPT to the public in late 2022. Hype creates awareness, which typically increases funding and accelerates development. The hype has helped propel the capabilities of AI beyond what most thought was possible by now. We knew it would happen, just not this quickly. The AI hype train is starting to settle, so what should the masses expect in the next three years?

We will start with the more technical aspects of AI that are driving mass market adoption, keeping AI jargon to a minimum, to ground us. Then we will move on to the impact on you and me, the employees and consumers.

Mass market AI adoption comes down to pricing and efficiency. Large corporations and federal agencies have been implementing AI because they have the financial resources or the skilled staff to handle the work. Mass adoption means that most organizations can use AI and get a positive ROI from it.

AI Prices Have Dropped Significantly and Prices Will Continue To Drop

There are three primary ways organizations implement AI models.

- Large Proprietary Model APIs: An individual or organization can use an existing proprietary model such as Claude (Anthropic’s family of AI models) or Google’s Gemini suite of models. These are the models many consumers know, as they can be used through web-based portals like Grok.com or ChatGPT.com. The models can also be accessed programmatically through an API, with pricing based on the tokens sent to and returned by the model. A token is a word or a piece of a word; as a rough mental model, you can think of each word in your ChatGPT.com prompt and in the resulting output as a token. The same process occurs when using the API, but a fee is incurred. Some services, like Google’s Gemini models, provide free API tiers, but those have low usage limits intended for testing and are not viable for production systems. The larger the prompt and the larger the output, the higher the cost when using an AI model API.

- Use a free base AI model or create a custom model: Very good free base models, typically called “open weight models”, are available (more on those later). You can use them as they are or customize them to your needs, resulting in a fine-tuned custom model. These models may be implemented in two ways:

  - Managed inference APIs: AI ‘inference’ services like Groq.com and Together.ai can run a custom model as well as open weight base models that are already available. The term inference simply refers to the process of running an AI model. Pricing is based on tokens processed (input and output), like the proprietary models above. Managed inference APIs are typically cheaper than their large proprietary counterparts because the models they serve are smaller and usually freely available.

  - Self Hosted: Install an open weight or custom model in your own environment, typically a cloud like AWS or Azure, on specialized hardware called GPUs. In this scenario you pay for the hardware, not for the use of the model, so the model can be used as often as needed at no additional cost.
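To make the two pricing styles above concrete, here is a minimal Python sketch. The function names, token counts, request volume and prices are illustrative assumptions, not quotes from any provider; the point is only that token-based services bill per use while self-hosting bills per hour of hardware.

```python
def api_cost(input_tokens, output_tokens, input_price_per_m, output_price_per_m):
    """Dollar cost of one API call when prices are quoted per million tokens."""
    return (input_tokens * input_price_per_m
            + output_tokens * output_price_per_m) / 1_000_000


def self_hosted_cost(gpu_hours, price_per_gpu_hour, gpu_count=1):
    """Dollar cost of renting GPUs; it does not depend on how many tokens are processed."""
    return gpu_hours * price_per_gpu_hour * gpu_count


# Hypothetical workload: 1,500 prompt tokens and 500 output tokens per request.
cost_per_call = api_cost(1_500, 500, input_price_per_m=0.10, output_price_per_m=0.40)

# Hypothetical volume: 100,000 requests per month on a token-priced API.
monthly_api_cost = cost_per_call * 100_000

# Hypothetical self-hosted setup: one GPU at $3.00/hour, running all month.
monthly_gpu_cost = self_hosted_cost(gpu_hours=24 * 30, price_per_gpu_hour=3.00)

print(f"API cost per call:  ${cost_per_call:.5f}")
print(f"API cost per month: ${monthly_api_cost:.2f}")
print(f"GPU cost per month: ${monthly_gpu_cost:.2f}")
```

With these made-up numbers the token-priced API is far cheaper, but the comparison flips as volume grows, because the GPU bill stays flat while the API bill scales with every token.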

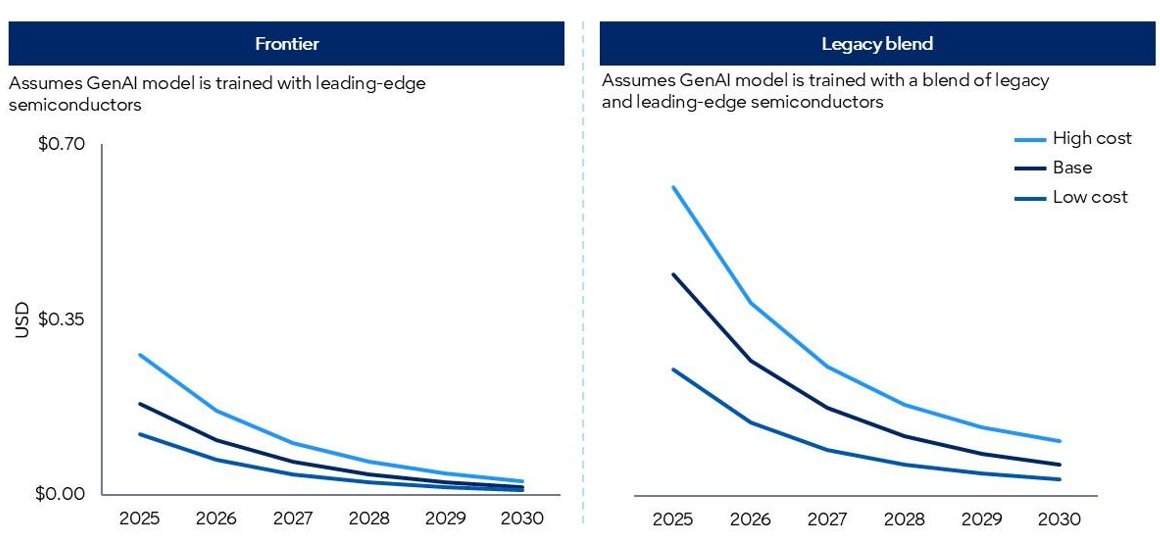

Regardless of the scenario, prices have dropped significantly over the past three years:

| AI Implementation Method | Examples | Early 2023 Price | Early 2026 Price | 3-Year Drop |
| --- | --- | --- | --- | --- |
| Large Model APIs | ChatGPT, Gemini, Claude | $20–$37/M tokens | $0.07–$0.40/M tokens | 95%+ |
| Managed Inference APIs | Groq, Together.ai, Fireworks | ~$20/M tokens | $0.03–$0.20/M tokens | 95%+ |
| Self-Hosted On-Demand Cloud | AWS, GCP, Azure GPU | $6–$8/GPU-hr | $2–$4/GPU-hr | ~50% |

Over the next three years, Large Model API and Managed Inference API prices (the top two rows above) are expected to continue to drop significantly, by an additional 75% or more. These are the services with usage-based pricing. Self-hosted implementation costs may go up slightly over the same period, as those prices are driven by the cost of hardware (primarily GPUs). In that scenario, however, you have full-time access to the GPU(s), and AI models of the same size are getting much more powerful, inference techniques are becoming more efficient, and GPUs themselves are getting more powerful. From a value perspective, effective self-hosted pricing will therefore still decrease over time, just not as much as the APIs.

| AI Implementation Method | Examples | Early 2026 Price | Early 2029 Price (Estimated) | Estimated 3-Year Change |
| --- | --- | --- | --- | --- |
| Large Model APIs | ChatGPT, Gemini, Claude | $0.07–$0.40/M tokens | $0.02–$0.10/M tokens | -75% |
| Managed Inference APIs | Groq, Together.ai, Fireworks | $0.03–$0.20/M tokens | $0.01–$0.05/M tokens | -75% |
| Self-Hosted On-Demand Cloud | AWS, GCP, Azure GPU | $2–$4/GPU-hr | $2.25–$4.50/GPU-hr | +10% |

Gartner recently reported that per-token AI prices will continue to ‘plunge’, but that increasingly advanced uses of AI (especially Agentic AI) consume more tokens. Usage therefore needs to be evaluated on an ongoing basis, and hybrid approaches should be considered.
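The interplay between falling prices and rising token usage is simple arithmetic: spend is price multiplied by volume. The sketch below uses the 75% price drop estimated above; the current spend and the usage multiplier are hypothetical values chosen only for illustration.

```python
def projected_spend(current_spend, price_change, usage_multiplier):
    """Future spend after a fractional price change and a change in token volume.

    price_change is a fraction, e.g. -0.75 for a 75% price drop.
    usage_multiplier is future token volume divided by current token volume.
    """
    return current_spend * (1 + price_change) * usage_multiplier


def break_even_usage_multiplier(price_change):
    """How much token usage can grow before total spend starts to rise."""
    return 1 / (1 + price_change)


# A 75% price drop is fully offset once usage grows 4x.
offset_point = break_even_usage_multiplier(-0.75)

# Hypothetical: $1,000/month today, 10x more tokens after moving to agentic workflows.
future_spend = projected_spend(1_000, price_change=-0.75, usage_multiplier=10)

print(f"Usage growth that cancels the price drop: {offset_point:.1f}x")
print(f"Projected monthly spend: ${future_spend:,.2f}")
```

In this hypothetical case the bill rises even though the per-token price fell by three quarters, which is the reason usage needs ongoing evaluation.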

Why does this context matter? Early adopters typically pay a premium, but pricing is now reaching a point that enables mass market use. Pricing is not the only factor that will drive the masses to AI. Next we will explain the other major factor: AI model efficiency and portability.

AI Power, Portability and Efficiency

In early 2025 DeepSeek made headlines when it released its reasoning model (R1), which performed nearly as well as the top US large proprietary models, such as those from OpenAI, at a fraction of the price. How did they do this? Lower hardware and personnel costs in China are one factor, but the factor with longer-term implications for the industry is increased model performance and efficiency. AI models are becoming much more powerful while the efficiency of running them is increasing significantly.

DeepSeek should not be solely credited for this, but the release of its model in 2025 highlighted the fact that the AI industry needs to move, and is moving, from simply building the biggest supercomputers to building models that run more efficiently and are increasingly portable. For mass AI adoption to take effect, these models need to run on as little hardware as possible, as hardware is what primarily determines the cost. That progression is happening.

The bottom line is that in many use cases AI models are now smart enough, and just as much effort is being put into making them more efficient and portable (LoRA, a parameter-efficient fine-tuning technique, is one example). Mixture-of-Experts (MoE) will be a driving factor in making these models more efficient even as they become more powerful. An MoE model consists of a set of specialized sub-models (“experts”), so when the model is called typically only a portion of it needs to be used, reducing computing needs and overall token costs.

- Open weight MoE models include DeepSeek R1, Qwen 3.5, Llama 4 Maverick (Meta) and Mistral Large 3

- Enterprise / Proprietary-Focused MoE models include Google Gemini 3.1 Pro and Kimi K2.5 (Moonshot AI API)
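The idea that only a portion of an MoE model runs per request can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not how any of the models listed above is implemented: the four "experts" are trivial functions and the router scores are hard-coded, whereas real models learn the router and apply it per token inside each layer.

```python
import math


def softmax(scores):
    """Turn raw router scores into probabilities that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_top_k(router_scores, k):
    """Pick the k highest-scoring experts and renormalize their weights to sum to 1."""
    probs = softmax(router_scores)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    return [(i, probs[i] / norm) for i in top]


def moe_forward(x, experts, router_scores, k=2):
    """Run only the selected experts and blend their outputs by router weight."""
    chosen = route_top_k(router_scores, k)
    output = sum(weight * experts[i](x) for i, weight in chosen)
    return output, [i for i, _ in chosen]


# Toy experts (hypothetical stand-ins for large neural sub-networks).
experts = [lambda x: x + 1, lambda x: x * 2, lambda x: x - 3, lambda x: x ** 2]

# Hard-coded router scores; a real router computes these from the input.
scores = [0.1, 2.0, -1.0, 2.0]

output, used = moe_forward(3.0, experts, scores, k=2)
print(f"Experts used: {used} of {len(experts)}; output = {output}")
```

Only two of the four experts are evaluated here, which is where the compute saving comes from: the full model can hold many experts while each request pays for just a few.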

It is that increased efficiency, together with reduced cost, that will enable mass adoption. We will be there within three years, at which point cost will not be a limiting factor for a positive ROI in most use cases.

Artificial General Intelligence and the AI Community

The larger AI firms may respond that making the large proprietary models smarter is just as important as efficiency, and I would not disagree. But for mass adoption, the models are smart enough in the majority of use cases, and efficiency is king, as it is the predominant cost factor now that the early adopter phase is behind us.

The larger AI firms are in a race to Artificial General Intelligence (AGI), the point at which AI models can perform human-level cognitive tasks across many domains better than human experts. It is good that this race continues, as the learnings and techniques will continue to trickle down to how most organizations and individuals use AI models, and complex fields like medical research will greatly benefit from these advances. However, ethical and safety risks need to be considered in this race to AGI.

Not all AI firms and divisions are focused on profit. Others are providing value in different ways, like AllenAI (AI2), a non-profit which “develops foundational AI research and innovation to deliver real-world impact through large-scale open models, data, robotics, conservation, and beyond”. Non-profit organizations like AllenAI, federal agencies, and educational institutions are critical to bringing AI to the masses in a cost-effective manner. AI originated in academia. Funding for these organizations is critical so that we have a balanced approach to AI that is not driven solely by for-profit organizations.

As an example, AI2 released FlexOlmo last year, an open “Collaborative Model Training” solution. These are the types of solutions we need to continue pushing AI forward to the masses. Not only could this approach be used to create more powerful and specialized models, but those models could potentially be deployed with less computing power.

The AI community will be the predominant driving force for the next 3 years.

The “AI community” will have the biggest impact on the progression of AI over the next 3 years now that AI has taken off: an impact on ease of AI model development, AI performance and efficiency. A full ecosystem of tools and techniques has popped up, many free or fully open source, that allows individuals and organizations to become well-versed in AI at little cost. It is this community which will push AI for the masses to the next level. It is now unlikely that one AI firm will stand head and shoulders above the others, as the old days of the best proprietary hardware and software being gated at one or a few firms are over.

xAI delivered a state-of-the-art AI model (Grok), comparable to any other model available at the time, within 15 months of starting. That is not because they had the best and brightest there. I am sure the initial staff at xAI were very good, like those at the other firms. It is because everyone comes from the same AI community, and we learn together regardless of where we are employed. For-profit, non-profit, education, government: it is one community pushing us forward. It is the same reason why DeepSeek (a Chinese firm) delivered a top model in 2025. The AI community has no borders.

Many smaller “open weight” models like Mistral and Qwen3 are now less than 12 months behind the large proprietary models (ChatGPT, Grok, Gemini, ...), and those open weight models may be ‘fine-tuned’ to specific needs. Hugging Face provides an Open LLM Leaderboard that helps in identifying the current highest performing open weight models. In the next 3 years most small to mid-sized companies will be able to embed AI into functions where it makes sense at a positive ROI.

Workforce Realignment & Impact on the US Economy

AI has already had a large impact on certain industries (especially IT) and will have an impact on others in the future, but predictions that no one will need to work again are not coming true, at least not in my lifetime. If you still believe them, become an electrician. No electricity, no AI.

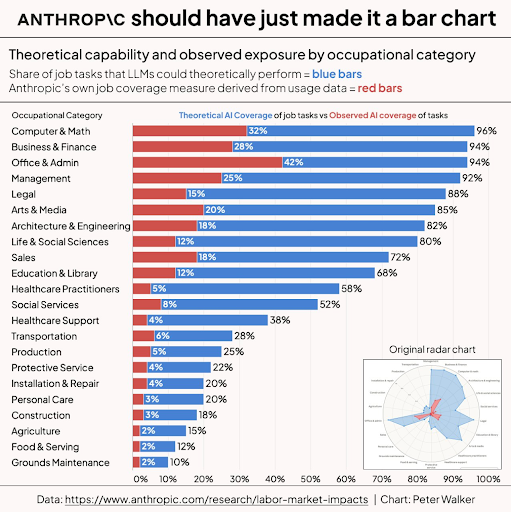

The impact of AI on jobs, and the level of exposure, will vary now and over the next 3 to 5 years.

The adjustment of human resources has already started in some industries. It has taken its toll and will continue to do so. An adjustment in corporate mindset and values is required as well. Executives at public firms are accountable to shareholders, and difficult decisions need to be made, but everyone in corporate America needs to understand that this goes beyond individuals losing their jobs. If corporate America views AI only as a means to increase profits, the wealth inequality gap will increase dramatically, and it will significantly hurt those who are newer to the workforce. Make no mistake, that wave will eventually flow over to the majority of Americans: if the younger workforce can’t afford to buy a home, invest in a 401(k), or pay into Social Security and Medicare, it will catch up to all but the rich.

Will America shut down? No, but “the land of dreams” will become more distant for most. So a word to those who have to make those difficult corporate decisions: perhaps lay off fewer people and set a mandate that everyone needs to evolve quickly, which will enable accelerated future growth. The majority of the workforce wants to be challenged, and we would all like to see the US continue to be the land of prosperity and dreams well into the future. Ensuring a profitable business for the shareholders is one thing; creating much larger profit margins at the expense of individuals, and in the longer term the American way of life, is short-sighted. The same is true for other nations. We are in this global economy together.

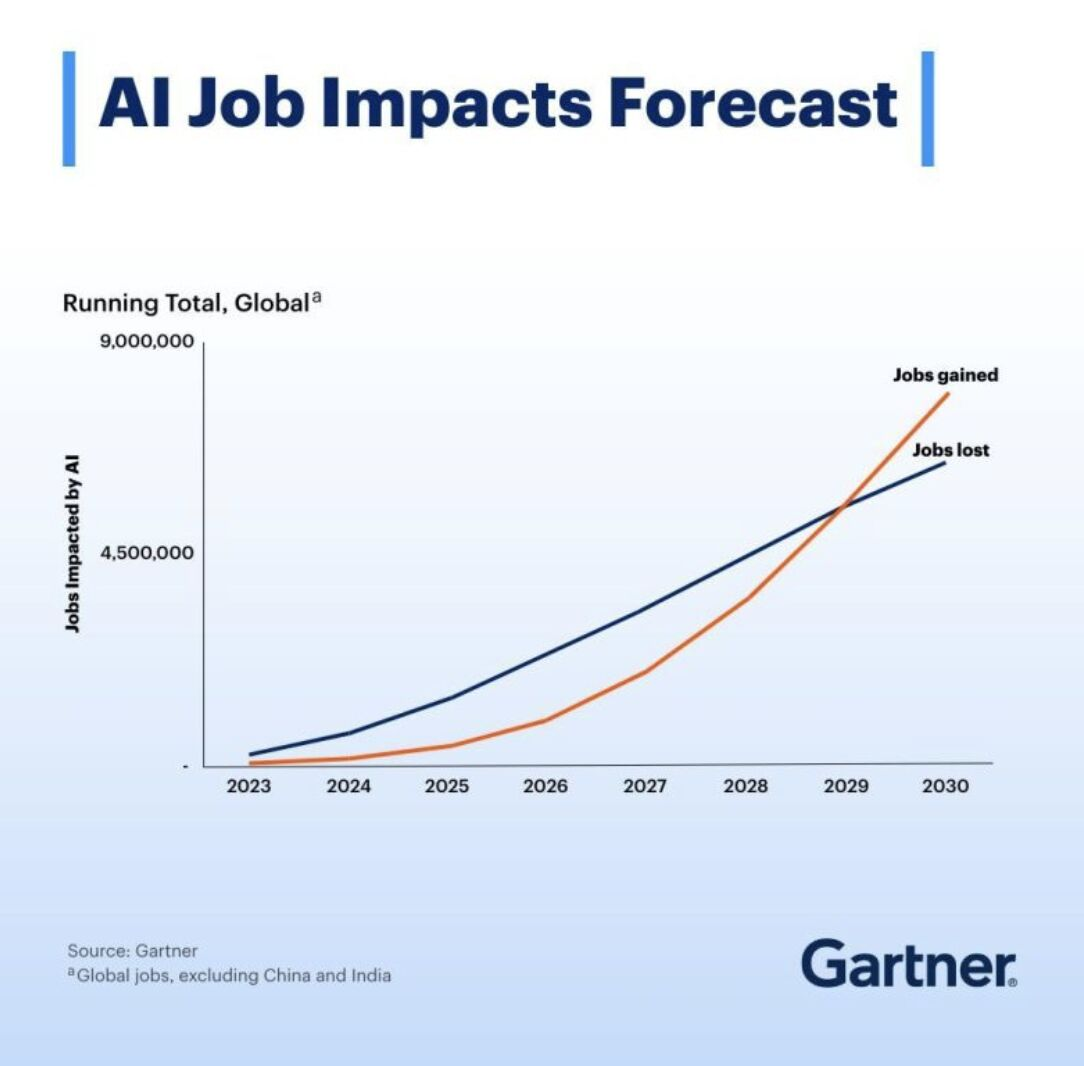

Let’s conclude on an optimistic note: AI will enable the world to evolve much more quickly, and many, including Gartner, believe that in the long run industries and individuals will evolve, enabling job growth. When the job loss / job growth inflection point will occur is difficult to pinpoint, and a healthy level of skepticism and concern is understandable. Regardless, most of us need to start to prepare and evolve now.

Recommendations

The ongoing AI price reductions and efficiency techniques, which will enable most organizations to adopt AI cost-effectively in the next three years, need to be evaluated continually, along with their impact on the workforce:

- Organizations new to AI should consider an initial proof of concept. Evaluate AI inference providers (Groq, Together.ai, Fireworks), as it is much easier to use those providers than to build and implement a custom AI solution. Then, once the initial system is running, evaluate costs and estimate when you should transition to a custom AI system based on potential impact, needs and costs. In some use cases, AI inference providers will be cost effective long term, especially with the ongoing price reductions.

- Many of the available ‘open weight’ AI models will work in certain use cases, so evaluate a few (Mistral, Llama, Qwen, ...) to establish a performance baseline. If additional performance or customization is required, move on to testing custom fine-tuned AI models and RAG systems with your data. Also test the enterprise / proprietary AI models (ChatGPT, Grok, Gemini, ...): although they are more expensive than the AI inference providers, they provide increased performance.

- As an organization evolves, hybrid systems should be evaluated: the more advanced the AI requests, the more tokens are used, and portions of the task (or function) may be able to be pushed to less costly options like AI inference providers. Mixture-of-Experts (MoE) models should be evaluated as well.

- Use AI as a means of cost reduction and a growth enabler. AI will open up time for individuals to focus on higher value tasks and as the workforce evolves so will the organization.

- If your career or aspiration is in the top half of the Anthropic AI industry impact chart above, spend time learning how to use AI. Most will not need to know how to build AI systems but those who know how to use AI to make themselves more efficient will be more valuable to their organization.
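The hybrid approach recommended above can be sketched as a simple router that sends each request to the cheapest option able to handle it. Everything in this Python example is a hypothetical illustration: the tier names, the 1-to-5 complexity scale, and the blended per-million-token prices (picked from within the early 2026 ranges in the pricing table) are assumptions, and scoring the complexity of a request is left out entirely.

```python
from dataclasses import dataclass


@dataclass
class Tier:
    name: str
    price_per_m_tokens: float  # blended input/output price, hypothetical
    max_complexity: int        # hardest request this tier handles well (1-5 scale)


def choose_tier(complexity, tiers):
    """Return the cheapest tier whose capability covers the request."""
    eligible = [t for t in tiers if t.max_complexity >= complexity]
    if not eligible:
        raise ValueError(f"no tier can handle complexity {complexity}")
    return min(eligible, key=lambda t: t.price_per_m_tokens)


def total_cost(requests, tiers):
    """Sum the cost of (complexity, tokens) requests after routing each one."""
    cost = 0.0
    for complexity, tokens in requests:
        tier = choose_tier(complexity, tiers)
        cost += tokens * tier.price_per_m_tokens / 1_000_000
    return cost


# Hypothetical tiers: an open weight model on a managed inference API
# and a large proprietary model API.
open_weight = Tier("managed-open-weight", price_per_m_tokens=0.10, max_complexity=2)
proprietary = Tier("large-proprietary", price_per_m_tokens=0.40, max_complexity=5)

# Hypothetical monthly workload as (complexity, tokens) pairs.
workload = [(1, 2_000_000), (2, 1_000_000), (4, 1_000_000)]

hybrid_cost = total_cost(workload, [open_weight, proprietary])
proprietary_only_cost = total_cost(workload, [proprietary])

print(f"Hybrid routing:   ${hybrid_cost:.2f}")
print(f"Proprietary only: ${proprietary_only_cost:.2f}")
```

The design choice worth noting is that routing is decided per request, so simple tasks never pay the premium price while the harder ones still reach the stronger model.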